INTRODUCTION

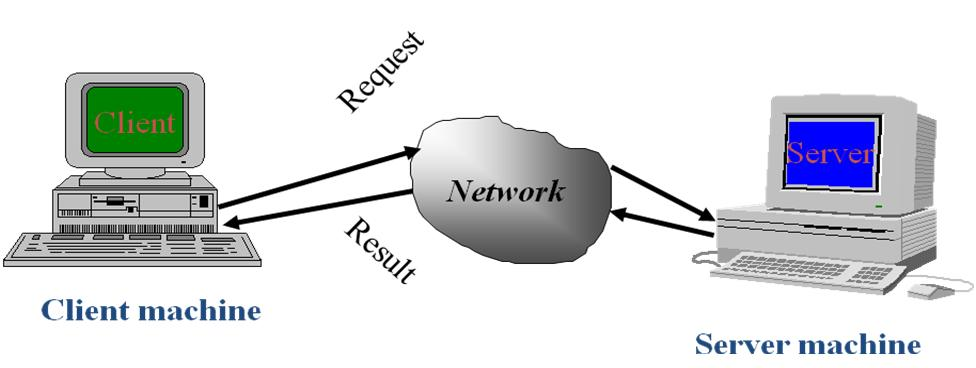

Some of you may remember when a 5¼-inch floppy disk was enough to hold a whole operating system. Now computers ship with hard disks measured in gigabytes, and we still feel it is not enough. The point is that the amount of data any individual deals with has increased significantly, and managing this data is a problem, right? If so, we definitely need to build something to manage this data. The answer is Hadoop.

In a traditional non-distributed architecture, data is stored on one server, and every client program accesses this central data server to retrieve it. The non-distributed model has a few basic issues. You mostly scale vertically, by adding more CPU, more storage, and so on (scaling up). The architecture is also not reliable: if the main server fails, you have to go back to the backup to restore the data. From a performance point of view, it will not return results quickly when you run a query against a huge data set.

In a Hadoop distributed architecture, both data and processing are distributed across multiple servers. Put simply, each server offers local computation and storage. When you run a query against a large data set, every server in the cluster executes the query on its own machine against its local data. Finally, the result sets from all these servers are consolidated. In simple terms, instead of running a query on a single server, the query is split across multiple servers and the results are combined. This means that the results of a query on a large data set are returned faster.
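The split-and-consolidate idea described above can be sketched in a few lines of Python. This is only an illustration, not how Hadoop itself is implemented: the `PARTITIONS` data, the `local_query` and `distributed_query` names, and the use of threads to stand in for separate servers are all assumptions made for the example.

```python
from concurrent.futures import ThreadPoolExecutor

# Hypothetical partitions: each inner list stands for the rows stored on one server.
PARTITIONS = [
    ["amir", "shabir", "neha"],
    ["ravi", "shabir"],
    ["zoya", "imran"],
]


def local_query(rows, wanted):
    """Run the query against one server's local rows only."""
    return [name for name in rows if name == wanted]


def distributed_query(partitions, wanted):
    """Send the same query to every partition, then consolidate the results."""
    with ThreadPoolExecutor(max_workers=len(partitions)) as pool:
        # Each worker thread plays the role of one server in the cluster.
        partial_results = pool.map(lambda rows: local_query(rows, wanted), partitions)
        combined = []
        for part in partial_results:
            combined.extend(part)
    return combined


matches = distributed_query(PARTITIONS, "shabir")
print(len(matches))
```

Each partition is scanned independently, so adding servers shrinks the amount of data any one of them has to read. In a real cluster the partitions live on different machines and the consolidation step runs over the network.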

In the same way, the amount of data has grown so huge (terabytes, exabytes, and zettabytes) that an RDBMS is not able to handle processing it. Big Data is the answer, and it is usually characterized by three properties:

- Volume: Big companies like Facebook, Google, Yahoo, and many others produce a huge amount of data every day. This data needs to be stored, analyzed, and processed in order to understand the market, trends, and customers.

- Variety: Data comes from different sources in different forms, such as binaries, videos, text, images, and emails. It is unstructured or semi-structured, unlike what you have in database tables, so it is quite tough for traditional systems to handle its quality and quantity.

- Velocity: Executing a query takes time. What if you have the population of India to query, with something like "select name from TableIndia where name='shabir'"? It will take a huge amount of time to find the name shabir. To analyze data like this, we have to develop a system that processes it at much higher speed and with high scalability.

The Hortonworks Data Platform, powered by Apache Hadoop, is a 100% open source platform for storing, processing, and analyzing large volumes of data. It is designed to deal with data from many sources and formats in a very quick, easy, and cost-effective manner. The Hortonworks Data Platform consists of the essential set of Apache Hadoop projects, including MapReduce, Hadoop Distributed File System (HDFS), HCatalog, Pig, Hive, HBase, ZooKeeper, and Ambari. Hortonworks is the major contributor of code and patches to many of these projects.

We usually associate Hadoop with Big Data, but what is Big Data really?

Big Data means data sets that are too big to manage effectively with traditional database technologies. Typically this means the data sets are terabytes in size or larger. So what do I mean by "manage effectively"? The ability to import the data and query it based on the requirements of the business logic. These requirements are typically expressed in terms of elapsed time. Simply put, there is too much data to store and process in a traditional database.

In practice, "Big Data" does not imply anything about the structure of the data. The process of ETL (extract, transform, and load) is almost always applied in data warehousing to normalize the data before any querying is done. ETL can also be used in Big Data analytics. So the structure of the data is a separate dimension, although an important one, as the ETL process may be costly.
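To make the three ETL steps concrete, here is a minimal sketch using only the Python standard library. The sample data, the `people` table, and the `extract`, `transform`, and `load` function names are assumptions chosen for illustration, and an in-memory SQLite database stands in for a real warehouse.

```python
import csv
import io
import sqlite3

# Hypothetical raw export with inconsistent spacing and casing.
RAW_CSV = """name,city
 Shabir ,SRINAGAR
neha,  delhi
"""


def extract(text):
    """Extract: parse the raw CSV text into dictionaries."""
    return list(csv.DictReader(io.StringIO(text)))


def transform(rows):
    """Transform: normalize whitespace and casing so rows are comparable."""
    return [(r["name"].strip().title(), r["city"].strip().title()) for r in rows]


def load(rows, conn):
    """Load: write the cleaned rows into a queryable table."""
    conn.execute("CREATE TABLE IF NOT EXISTS people (name TEXT, city TEXT)")
    conn.executemany("INSERT INTO people VALUES (?, ?)", rows)
    conn.commit()


conn = sqlite3.connect(":memory:")
load(transform(extract(RAW_CSV)), conn)
print(conn.execute("SELECT name FROM people WHERE city = 'Delhi'").fetchall())
```

The query at the end only works because the transform step normalized "  delhi" to "Delhi" first, which is the kind of up-front cost the paragraph above refers to.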

Note also that even if a data set does not satisfy the criteria for Big Data, the tools of the trade, such as NoSQL and Hadoop, can still be relevant for processing it. As I'll explain later, these technologies, although originally developed for Big Data, have much broader usefulness.

1.1. Understand the Basics

The Hortonworks Data Platform consists of 3 layers.


• Core Hadoop: The basic components of Apache Hadoop.

  - Hadoop Distributed File System (HDFS): A special purpose file system that is designed to work with the MapReduce engine. It provides high-throughput access to data in a highly distributed environment.

  - MapReduce: A framework for performing high volume distributed data processing using the MapReduce programming paradigm.

• Essential Hadoop: A set of Apache components designed to ease working with Core Hadoop.

  - Apache Pig: A platform for creating higher level data flow programs that can be compiled into sequences of MapReduce programs, using Pig Latin, the platform’s native language.

  - Apache Hive: A tool for creating higher level SQL-like queries using HiveQL, the tool’s native language, that can be compiled into sequences of MapReduce programs.

  - Apache HCatalog: A metadata abstraction layer that insulates users and scripts from how and where data is physically stored.

  - WebHCat (Templeton): A component that provides a set of REST-like APIs for HCatalog and related Hadoop components.

  - Apache HBase: A distributed, column-oriented database that provides the ability to access and manipulate data randomly in the context of the large blocks that make up HDFS.

  - Apache ZooKeeper: A centralized tool for providing services to highly distributed systems. ZooKeeper is necessary for HBase installations.

• Supporting Components: A set of components that allow you to monitor your Hadoop installation and to connect Hadoop with your larger compute environment.

  - Apache Oozie: A server based workflow engine optimized for running workflows that execute Hadoop jobs.

  - Apache Sqoop: A component that provides a mechanism for moving data between HDFS and external structured data stores. Can be integrated with Oozie workflows.

  - Apache Flume: A log aggregator. This component must be installed manually.

  - Apache Mahout: A scalable machine learning library that implements several different approaches to machine learning.

MapReduce is the key algorithm that the Hadoop MapReduce engine uses to distribute work around a cluster.

The Map

A map transform is provided to transform an input data row of key and value to an output key/value:

- map(key1, value1) -> list<key2, value2>

That is, for an input it returns a list containing zero or more (key, value) pairs:

- The output can be a different key from the input

- The output can have multiple entries with the same key

The Reduce

A reduce transform is provided to take all values for a specific key, and generate a new list of the reduced output.

- reduce(key2, list<value2>) -> list<value3>
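The map and reduce signatures given earlier can be tried out with a small word count written in plain Python. This is a single-process sketch of the programming model only, not the Hadoop API: `map_fn`, `reduce_fn`, `run_mapreduce`, and the sample input are names and data invented for the example, and the grouping step in the middle stands in for what Hadoop calls the shuffle.

```python
from collections import defaultdict


def map_fn(key1, value1):
    """map(key1, value1) -> list<key2, value2>: emit (word, 1) per word in a line."""
    return [(word.lower(), 1) for word in value1.split()]


def reduce_fn(key2, values):
    """reduce(key2, list<value2>) -> list<value3>: sum the counts for one word."""
    return [sum(values)]


def run_mapreduce(records):
    """Apply map to every record, group by key (the shuffle), then reduce each group."""
    grouped = defaultdict(list)
    for key1, value1 in records:
        for key2, value2 in map_fn(key1, value1):
            grouped[key2].append(value2)
    return {key2: reduce_fn(key2, values) for key2, values in grouped.items()}


# Hypothetical input: (line number, line text) pairs.
lines = [(0, "big data big cluster"), (1, "data node")]
counts = run_mapreduce(lines)
print(counts["big"], counts["data"])
```

Note how the map output key (the word) differs from the input key (the line number), and how the same key is emitted more than once, matching the two properties listed for the map step. On a cluster, the map calls run on the servers that hold each piece of input, and the framework routes all values for one key to the same reducer.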

Next question: Will Big Data impact existing BI infrastructure?

Each will find its appropriate place in corporate IT.

There are arguments on each side of the question. Hadoop is free and open source, runs on low-cost commodity hardware, and provides virtually unlimited storage of structured and unstructured data. However, few organizations have stable, production-ready Hadoop deployments, and the tools and technologies currently available for accessing and analyzing Hadoop data are in early stages of maturity. The fact is that there are still complaints or issues with:

- query performance,

- the ability to perform real-time analytics,

- the preference of business analysts and developers to leverage existing SQL skills, which matters a lot for whether a technology flourishes.

Data warehouses represent the established and mature technology, and they are not likely to go away easily. Nearly all enterprises have data warehouses and data marts in place that took time to build, and they are delivering significant business value. However, data warehouses are not designed to accommodate the increasing volumes of unstructured data from social media like Facebook and Twitter, mobile devices, sensors, medical equipment, industrial machines, and other sources. There are also both economic and performance limitations on the amount of data that can be stored and accessed.

MY OPINION AND CONCLUSION

My opinion is that new developments and innovations that make Hadoop data more accessible, more usable, and more relevant to business users will blur the distinctions between Hadoop and the traditional data warehouse. The fact is that Hadoop is bigger than the data warehouse and will definitely boom and capture the market with time, and yes, it may gradually overtake data warehousing.

Organizations that have not yet adopted Hadoop may sometimes imagine that Hadoop is all about developers. But the fact is that Hadoop belongs to those who care about the OS, who understand how hardware choices impact performance, and who understand workload characteristics and how to tune for them. That could be a developer, a database administrator, or a system administrator, but DBAs are the most likely fit.

INTERVIEW QUESTIONS FROM edureka

Useful links:

- MapReduce concept: of course you have the original Google paper, the MapReduce tutorial on the Hadoop website, or the Cloudera training sessions. But I would also recommend more fun introductions, like the example described in this section of the excellent Introduction to Information Retrieval (a must-read book for search engineers) or this very nice post by Joel Spolsky.

- Around HDFS: the description of the HDFS architecture on the Hadoop website. Since the HDFS design is inspired by the Google File System, it may be worth reading about it here (the original Google paper) or here (a nice popularization of that paper).

- Related projects: Mahout (I described here my experience using Mahout Taste for a startup project), Pig (and a nice tutorial by Yahoo on the Cloudera website), HBase (an open source implementation of Google Bigtable), and many others that you can find by starting from the Hadoop website.

MICROSOFT BIG DATA: Take a learning machine and learn

Start here next. If you want to explore the Microsoft Hadoop implementation, just go through the following:

Explore

What's new in the Hadoop cluster versions provided by HDInsight?

Get started using Hadoop in HDInsight

Introduction to Hadoop in HDInsight

HDInsight HBase overview

Get started using HBase with Hadoop in HDInsight

Run the Hadoop samples in HDInsight

Get started with the HDInsight Emulator

Develop

Develop Java MapReduce programs for Hadoop in HDInsight

Develop C# Hadoop streaming programs for HDInsight

Use Maven to build Java applications that use HBase with Hadoop

HDInsight SDK Reference Documentation

Debug Hadoop in HDInsight: Interpret error messages

Submit Hadoop jobs in HDInsight

Use Hadoop MapReduce in HDInsight

Use Hive with Hadoop in HDInsight

Use Pig with Hadoop in HDInsight

Use Python with Hive and Pig in HDInsight

Move data with Sqoop between a Hadoop cluster and a database

Use Oozie with Hadoop in HDInsight

Serialize data with the Microsoft .NET Library for Avro

Analyze

Generate movie recommendations with Mahout

Perform graph analysis with Giraph using Hadoop

Analyze Twitter data with Hadoop in HDInsight

Analyze real-time Twitter sentiment with HBase

Analyze flight delay data using Hadoop in HDInsight

Connect Excel to Hadoop with the Microsoft Hive ODBC Driver

Connect Excel to Hadoop with Power Query

Manage

Availability and reliability of Hadoop clusters in HDInsight

Manage Hadoop clusters in HDInsight using Management Portal

Manage Hadoop clusters in HDInsight using PowerShell

Manage Hadoop clusters in HDInsight using the command-line interface

Monitor Hadoop clusters in HDInsight using the Ambari API

Use time-based Oozie coordinator with Hadoop in HDInsight

Provision Hadoop clusters in HDInsight

Provision HBase clusters on Azure Virtual Network

Upload data for Hadoop jobs in HDInsight

Use Azure Blob storage with Hadoop in HDInsight

TRAINING RESOURCES

Use a Hadoop learning VM for learning purposes. Good ones are Cloudera's VM and OpenSolaris live Hadoop.

Further:

Looking for more resources on Microsoft Hadoop Implementation?

Forums

Ask questions, share insights and discuss the platform

Reference

HDInsight SDK Documentation

Downloads

Azure PowerShell Cmdlets

Hadoop

Why choose Hadoop on Azure?


Hope this helps you. Do ask if you have any questions.

Shabir Hakim